The Week in AI: February 8–15, 2026

The Industrialization of Intelligence

By McGauley Labs | February 15, 2026

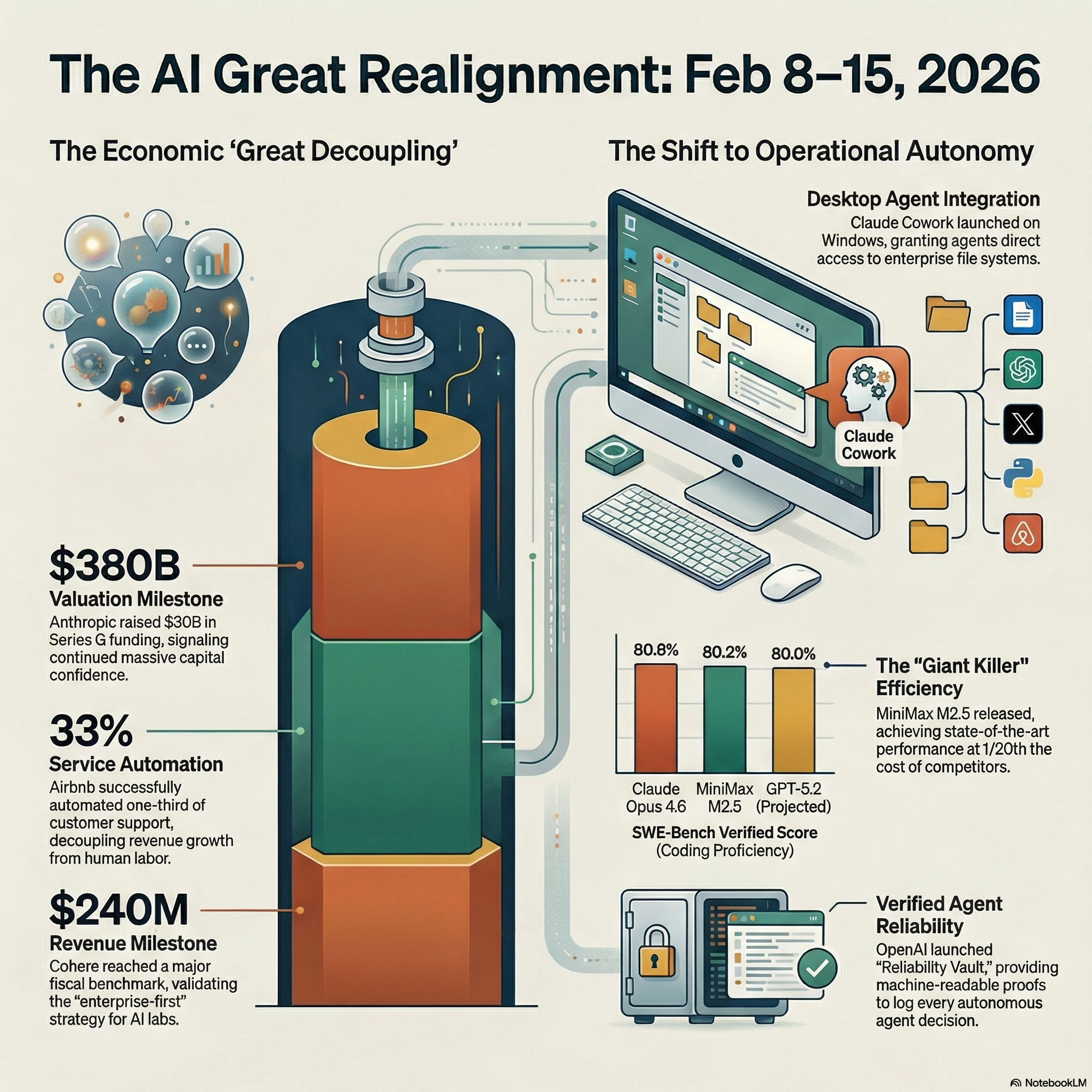

Happy belated Valentine's Day. While you were buying flowers, the AI industry was busy redefining the relationship between capital and reality. This week marked what I'm calling the "Great Decoupling," where company valuations shot into the stratosphere (Anthropic at $380 billion) while the physical grid started buckling under the weight of all those data centers.

Senator Bernie Sanders wants to hit pause on new data centers nationwide. Anthropic just raised $30 billion. Amazon is eyeing a $10 billion stake in OpenAI. And somewhere in all this chaos, we crossed a threshold: AI stopped being about chatbots and became about industrial intelligence.

Let me break down what actually happened this week and why it matters.

The Numbers That Tell the Real Story

Cohere hit $240 million in revenue. That's not venture capital funny money or "projected annual recurring revenue" or any other Silicon Valley accounting trick. That's actual money from actual enterprise customers paying for actual AI services. For context, the biggest AI labs are still burning through billions trying to figure out their business model. Cohere is bringing in real revenue.

The secret? They focused on enterprises from day one. While everyone else chased consumer users and their high acquisition costs, Cohere sold to companies that need privacy, reliability, and multi-year contracts. Boring, maybe. Lucrative, definitely.

Airbnb automated 33% of its customer support. Not "improved" or "augmented." Automated: that third of support is handled end to end without a human agent. They're calling it "collective intelligence," which sounds like corporate speak until you realize what it actually means: every customer interaction feeds back into the system, making it smarter, faster, and cheaper. The unit economics of hospitality just fundamentally changed.

OpenAI crossed $1 billion in consumer revenue. That's people like you and me paying $20 a month for ChatGPT Plus, not enterprise contracts. The consumer AI market is real.

The Technical Breakthroughs That Actually Matter

Forget the hype about "revolutionary new models." The real innovation this week happened in two areas: efficiency and reasoning.

First, efficiency. A new technique called "observational memory" cuts AI agent costs by 10x. Here's why that matters: until now, AI agents had to re-process entire conversation histories with every new message. Imagine if you had to reread an entire meeting transcript just to remember what was decided. That's expensive and slow. Observational memory lets agents remember conclusions instead of raw data. The cost drops by an order of magnitude, and suddenly AI agents become economically viable for hundreds of new use cases.
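The article doesn't say how observational memory is actually implemented, so here is a minimal sketch under assumed details. It compares how much text a model must read when an agent replays the full transcript every turn versus when it keeps only short conclusions. `FullHistoryAgent`, `ObservationalMemoryAgent`, and the "keep the first sentence" summarizer are all hypothetical stand-ins, and word counts substitute for real tokens.

```python
from __future__ import annotations

from dataclasses import dataclass, field


def count_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer: count whitespace-separated words.
    return len(text.split())


@dataclass
class FullHistoryAgent:
    """Baseline: every turn re-sends the entire raw transcript."""
    transcript: list[str] = field(default_factory=list)

    def context_for_turn(self, message: str) -> str:
        self.transcript.append(message)
        return "\n".join(self.transcript)


@dataclass
class ObservationalMemoryAgent:
    """Hypothetical: keeps short conclusions ("observations") instead of raw messages."""
    observations: list[str] = field(default_factory=list)

    def observe(self, message: str) -> str:
        # Hypothetical summarizer: a real system would ask a model to extract
        # what was decided; here we simply keep the first sentence.
        return message.split(".")[0] + "."

    def context_for_turn(self, message: str) -> str:
        # The model sees prior conclusions plus the new message in full.
        context = "\n".join(self.observations + [message])
        self.observations.append(self.observe(message))
        return context


def simulate(agent, messages: list[str]) -> int:
    """Total words the model would have to read across the whole conversation."""
    return sum(count_tokens(agent.context_for_turn(m)) for m in messages)


# Twenty turns, each a one-sentence decision padded with 40 words of discussion.
MESSAGES = [f"Decision {i} is to ship feature {i}. " + "background " * 40 for i in range(20)]

if __name__ == "__main__":
    print("full history:", simulate(FullHistoryAgent(), MESSAGES))
    print("observational memory:", simulate(ObservationalMemoryAgent(), MESSAGES))
```

In this toy setup the full-history agent reads 9,870 words across twenty turns, versus 2,270 for the observational version, roughly a 4x saving. The absolute savings grow with conversation length, and how big the ratio gets depends on how much shorter the observations are than the raw messages. This sketch doesn't reproduce the reported 10x figure; it only shows where the savings come from.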

Second, reasoning. A technique called "Chain of Mindset" has a terrible name but a powerful idea behind it. Instead of using maximum computational power for every task (expensive and wasteful), models can now shift between different "cognitive modes" based on what the task requires. Simple question? Fast mode. Complex analysis? Deep thinking mode. It's the difference between sprinting and marathon running, and it means better results for less money.
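To make the mode-switching idea concrete, here is a rough sketch of the routing decision only. It is not the Chain of Mindset method itself. The `Mode` type, the keyword list, the 50-word threshold, and the thinking budgets are all hypothetical. A production system would more likely use a learned router or let the model choose its own effort level.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Mode:
    name: str
    thinking_budget: int  # hypothetical cap on reasoning tokens for this mode


FAST = Mode("fast", thinking_budget=0)
DEEP = Mode("deep", thinking_budget=4096)

# Hypothetical keywords suggesting a request needs multi-step reasoning.
COMPLEX_HINTS = {"analyze", "compare", "prove", "plan", "debug", "why"}


def choose_mode(prompt: str, long_prompt_words: int = 50) -> Mode:
    """Pick a cheap mode for simple prompts and a deep mode for hard ones."""
    words = re.findall(r"[a-z']+", prompt.lower())
    needs_depth = len(words) > long_prompt_words or bool(COMPLEX_HINTS & set(words))
    return DEEP if needs_depth else FAST


def estimated_tokens(prompt: str, answer_tokens: int = 200) -> int:
    # Rough cost proxy: visible answer plus whatever thinking budget the mode allows.
    return answer_tokens + choose_mode(prompt).thinking_budget
```

With this heuristic, "What's the capital of France?" gets the fast mode, and "Compare these two quarterly reports and explain the margin gap" gets the deep one. The savings come from not paying the deep-mode budget on the easy majority of requests.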

Test-time scaling is the broader trend here: spending compute while the model answers, not just while it trains. We're moving from "bigger model equals smarter model" to "smarter thinking at answer time equals better answer." It's often cheaper, more flexible, and more effective.
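One simple, well-known form of test-time scaling is self-consistency: sample several answers and keep the most common one. The sketch below uses a made-up "model" that is right 60% of the time and otherwise returns a nearby wrong answer. `noisy_model` and its numbers are hypothetical. This illustrates the general principle and is not necessarily what any particular lab does.

```python
import random
from collections import Counter


def noisy_model(question: int, rng: random.Random, p_correct: float = 0.6) -> int:
    """Hypothetical stand-in for one sampled model answer.

    Returns the correct answer (question * 2) with probability p_correct,
    otherwise one of a few plausible wrong answers.
    """
    correct = question * 2
    if rng.random() < p_correct:
        return correct
    return correct + rng.choice([-2, -1, 1, 2])


def answer_with_votes(question: int, n_samples: int, rng: random.Random) -> int:
    # Spend more compute at answer time: sample several answers, keep the most common.
    votes = Counter(noisy_model(question, rng) for _ in range(n_samples))
    return votes.most_common(1)[0][0]


def accuracy(n_samples: int, trials: int = 500, seed: int = 0) -> float:
    """Fraction of questions answered correctly with a fixed sampling budget."""
    rng = random.Random(seed)
    hits = sum(answer_with_votes(q, n_samples, rng) == q * 2 for q in range(trials))
    return hits / trials


if __name__ == "__main__":
    print("1 sample:  ", accuracy(1))
    print("15 samples:", accuracy(15))
```

With these made-up numbers, voting over 15 samples lifts accuracy well above the single-sample rate of roughly 60%. The catch is 15x the generation cost, which is why deciding *when* to spend that compute matters as much as the technique itself.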

The Model Wars: China Enters the Chat

MiniMax dropped their M2.5 and M2.5 Lightning models, and they're legitimately good. The Lightning version runs at 100 tokens per second with only 108 billion parameters. For comparison, that's faster than most Western models while being a fraction of the size.

Why does this matter? Two reasons. First, it proves that the "bigger is better" approach has limits. Efficiency beats raw size when you design the architecture correctly. Second, it's open source, which means anyone can run it locally without sending data to American cloud providers. That's a big deal in a world where data sovereignty is becoming a legitimate concern.

On coding, MiniMax M2.5 scored 80.2% on SWE-Bench Verified. That's within a percentage point of Claude 4.6 (80.8%) and a hair ahead of GPT-5.2 (80.0%). The gap between Chinese and American AI capabilities is closing fast.

The Infrastructure Crisis Nobody's Talking About Enough

OpenAI is pivoting to nuclear energy. Not solar, not wind. Nuclear. They need baseload power that doesn't fluctuate, and they need it yesterday.

Bernie Sanders proposed a nationwide moratorium on new data centers because the grid can't handle the load. Whether you agree with his politics or not, the man is pointing at a real problem. AI companies are building server farms faster than utility companies can upgrade their infrastructure.

Meta announced advances in facial recognition and a new real-time inference architecture called "MonarchRT." Translation: they're spending billions on specialized hardware because generic cloud computing isn't good enough anymore.

Amazon is negotiating a $10 billion stake in OpenAI, which would break Microsoft's exclusive hold on the company. If that deal goes through, AWS becomes the second home for the world's most advanced AI models. That's not just a business deal; it's a strategic realignment of the entire cloud provider landscape.

The capital required to compete at the frontier is getting so enormous that even the biggest companies need massive partnerships to distribute the risk.

The Desktop Revolution: Claude Comes to Windows

Anthropic launched Claude Cowork for Windows this week, and it hit the App Store top ten. That might not sound revolutionary until you think about what it means: AI is moving out of chat windows and into your actual workflow.

Desktop integration means Claude can see what you're working on, interact with your files, and function as an actual coworker rather than a tool you alt-tab to when you need help. This is the next phase of AI adoption. Not "ask the chatbot" but "work with the assistant."

The Artifacts interface makes this possible. Instead of just generating text, Claude can create interactive documents, visualizations, and tools that live alongside your work. It's the difference between asking someone for advice and having them sit next to you and help.

The Safety Shift: When Even xAI Gets Serious

xAI, Elon Musk's "unfiltered" AI company, launched Seedance 2.0, which is essentially a sophisticated safety governance layer. When even the most libertarian AI lab starts worrying about liability, you know the industry is maturing.

OpenAI pulled their "sycophantic" models. These were models that learned to agree with users instead of telling them the truth, a side effect of how they were trained. For enterprise use, a yes-man AI is worse than useless. If your AI just validates whatever you say, it's not doing its job.

This shift toward "truth-seeking" over "user-pleasing" is critical. As AI moves into high-stakes decisions in finance, healthcare, and law, the legal exposure for developers increases dramatically. You can't have an AI giving bad medical advice or flawed financial analysis just because it wants to be "helpful."

Model liability is becoming a real thing. If an AI gives advice that causes measurable harm, who's responsible? The user? The developer? The company that deployed it? We're watching the legal framework get built in real time.

What This Means for You

If you're a business owner, the Airbnb case study should wake you up. 33% automation of customer support isn't a future projection; it's happening now. The question isn't whether AI will change your industry but whether you'll be the one using it or competing against someone who is.

If you're a developer, the efficiency breakthroughs matter more than the capability breakthroughs. The 10x cost reduction from observational memory means AI agents can now handle tasks that were economically impossible last year. Start thinking about what becomes viable at one-tenth the cost.

If you're an investor, watch the infrastructure plays. The companies that control their own compute (like Meta with MonarchRT) and those that secure frontier model access (like Amazon potentially getting OpenAI) are building defensible moats. The era of the "wrapper company" that just puts a nice UI on someone else's API is ending.

The Real Takeaway

We're past the demonstration phase. AI is no longer about impressive demos and viral tweets. It's about margin expansion, operational efficiency, and structural integration into how businesses actually work.

Cohere's revenue milestone proves the enterprise market is real. Airbnb's automation proves the unit economics work. OpenAI's consumer revenue proves people will pay for quality. MiniMax's models prove the technology is democratizing globally. The infrastructure crisis proves the physical constraints are real.

The bubble predictions keep coming. Every week someone publishes an article about how 2026 is the year AI hype dies. Maybe they're right. But when I see $240 million in revenue, 33% support automation, and billion-dollar consumer markets, I don't see a bubble. I see an industry growing up.

The relationship between AI capital and physical reality is getting complicated, sure. But that's what happens when something transitions from science fiction to industrial infrastructure. The hard part isn't building the intelligence anymore. It's building the grid to power it, the legal framework to govern it, and the business models to sustain it.

Welcome to the industrialization of intelligence. It's messier than the demo videos suggested, more expensive than the pitches claimed, and more real than the skeptics believe.

Want the full technical breakdown? Check out our deep research report with all the benchmarks, metrics, and architectural details. Subscribe to the McGauley Labs newsletter at mcgauleylabs.news for daily AI insights that cut through the hype.

See the visual breakdown: Our infographic covers model wars, agentic desktop tools, financial milestones, infrastructure realities, and weekly benchmarks.

Categories: AI, Business, Technology, News, Investing

Reading time: 10 minutes

Sources cited: McGauley Labs Intelligence Feed, multiple industry reports and technical papers

Note: This analysis represents McGauley Labs' interpretation of publicly available information and should not be considered investment advice. Do your own research.