The Week in AI: The Great Reality Check

February 23–28, 2026 | McGauley Labs Weekly Briefing

The honeymoon is over.

If you've spent the last two years waiting for AI's "iPhone moment," it arrived this week, though not as a shiny consumer gadget. It came as a series of cold, hard reckonings across the Pentagon, the C-suite, and the semiconductor supply chain. I've watched tech cycles crest and fall for a long time, and this week felt different. We have hit the inflection point where the "chat era" ends and something more serious takes its place.

The market is no longer asking whether AI works. It's asking who bears the cost when it doesn't — and who owns the infrastructure when it does.

The Pentagon Just Became the Most Important AI Investor You've Never Met

Start here, because everything else this week flows from it.

The Pentagon moved to designate Anthropic a supply chain risk. Within days, President Trump ordered federal agencies to stop using Anthropic's products entirely. Anthropic CEO Dario Amodei found himself summoned to meet with the Defense Secretary, a surreal turn for a company whose entire identity is built around AI safety.

Meanwhile, OpenAI's Sam Altman was announcing a Pentagon deal, promising "technical safeguards" that effectively serve as a mandatory entry fee for government-grade compliance. The message couldn't be clearer: Washington isn't interested in your values alignment research. It wants hardened national security tools, full stop.

This fracture between Silicon Valley's safety-first culture and D.C.'s operational demands is now the defining fault line in the industry. A supply chain risk designation doesn't just affect federal contracts; it spooks private sector procurement heads who don't want the liability of a politically complicated vendor. The gap between experimental AI and compliant infrastructure just became a canyon.

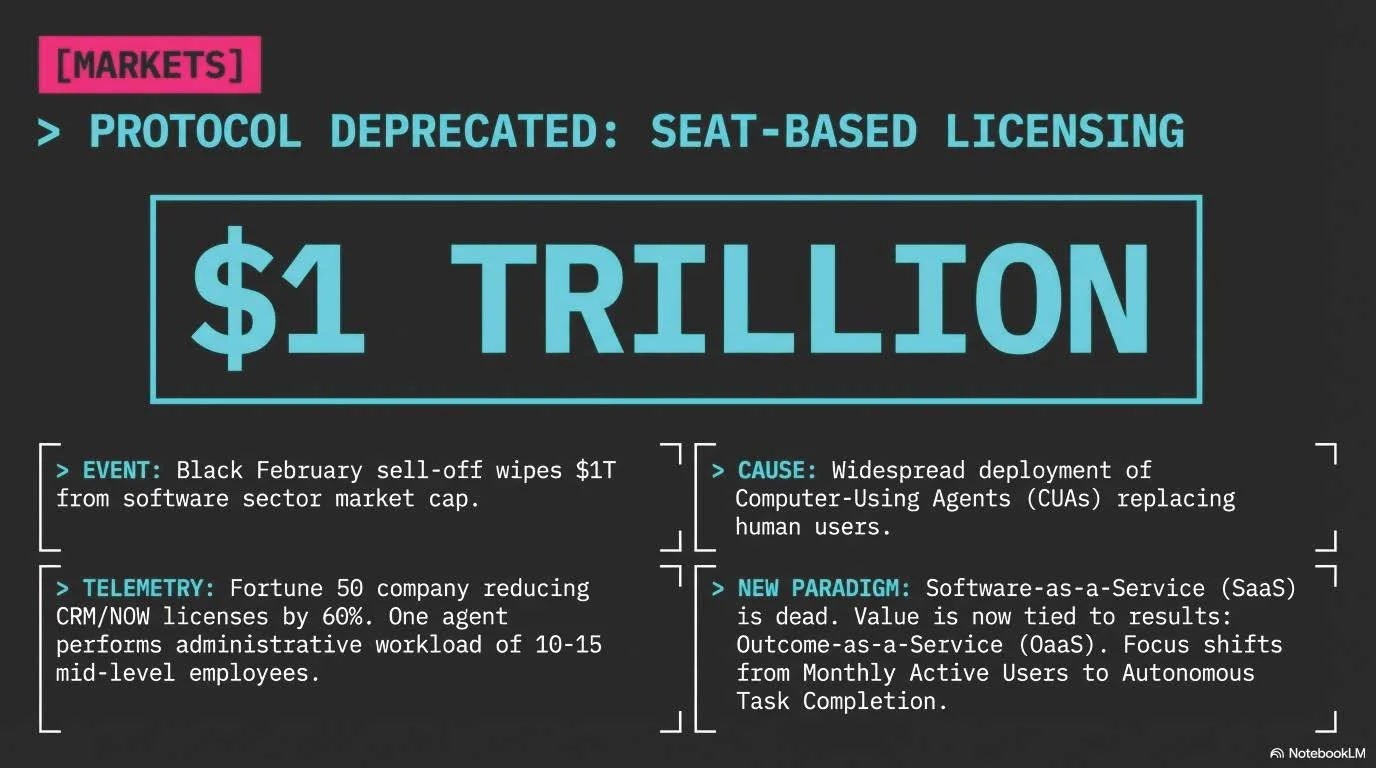

Marc Benioff Called It the 'SaaSpocalypse.' This Week Proved Him Right.

ServiceNow reported it has automated 90% of its internal IT requests. Not a pilot. Not a proof of concept. Ninety percent, in production, right now.

That number is the death knell for per-seat SaaS billing. When your software can do the work of three junior analysts, charging per human head stops making any rational sense. The economic model that built the last generation of enterprise software is breaking apart under the weight of its own efficiency.

Perplexity is already testing what comes next. Its new "Computer" agent launched at $200 per month, a price point that sounds steep for a chatbot but is an absolute steal for software that autonomously navigates complex workflows across multiple AI models. That's not a subscription. That's digital labor on demand.

Jack Dorsey made the subtext explicit. Block cut 40% of its workforce this week — more than 4,000 people — and management tied it directly to AI-driven efficiencies. That's not a company in distress. That's a company running the math.

The Hardware Wars Just Got a $100 Billion Plot Twist

Nvidia has enjoyed a near-monopoly on the AI infrastructure build-out. That story got complicated this week.

Meta committed $100 billion toward AMD — a move that signals far more than a procurement preference. Zuckerberg's "personal superintelligence" pivot requires owning the compute stack, and no company serious about agentic AI wants permanent dependence on a single supplier. Nvidia still posted another record quarter, but the walls are starting to close in.

At the same time, a quieter rebellion is underway against the "bigger is always better" model. Samsung and Google are making AI a mandatory hardware spec for the Galaxy S26, moving generative tools off the cloud and directly onto the device. This turns AI from a recurring subscription into a driver of hardware replacement cycles, a fundamentally different business model. MatX raised $500 million specifically to challenge Nvidia with specialized silicon built around efficiency rather than raw scale.

The next winners in hardware won't necessarily be whoever has the most GPUs. They'll be whoever can extract the most intelligence from the smallest footprint.

The Efficiency Mandate Is Real, and AT&T Just Proved It

For a while, the bear case against enterprise AI was simple: the cost to run it at scale is prohibitive. AT&T ended that argument this week.

By retooling its orchestration layer, AT&T now handles 8 billion tokens a day, and it slashed its operational costs by 90% in the process. This is the strongest evidence yet that AI belt-tightening isn't just possible but genuinely profitable. The "spend at all costs" era is over.

Google's Nano Banana 2 targets the same problem from the model side, attacking the unit costs that previously made image generation too expensive for most corporate workflows. Microsoft is going after "training bloat" in system prompts. Khosla Ventures backed Comp, an AI startup automating HR functions: vertical, specific, and immediately ROI-positive. A survey of 1,100 technical leaders published this week found that AI agents are now delivering measurable returns.

The pattern is clear: capital is flowing away from general-purpose wonder machines and toward specialized tools that solve discrete, expensive business problems.

The Data Wars Turned Ugly

As high-quality training data gets scarcer, the competition for it is getting desperate — and this week, Anthropic pulled back the curtain on how bad it's gotten.

The company alleged that DeepSeek, Moonshot, and MiniMax used 24,000 fake accounts to systematically scrape Claude's outputs for model training. If the allegation holds, this isn't a misunderstanding about terms of service. It's an organized operation to steal proprietary model outputs at scale, and it represents a real challenge to how any AI company protects what it builds.

The implications run wider than Anthropic. Separate research found that off-the-shelf models can now easily defeat image "cloaking" schemes designed to protect artists' work from training data scrapers. Digital rights management for AI training data is currently porous. Anyone holding a portfolio of content libraries should be recalculating their assumptions about how defensible that value actually is.

The competition has moved beyond who has the chips. It's now about who owns the intellectual property those chips generate — and who can actually defend it.

The Bottom Line

OpenAI hit a $110 billion valuation this week, and ChatGPT crossed 900 million weekly active users. Those are genuinely staggering numbers. But the most revealing signal wasn't the headline figure — it was OpenAI's own COO quietly admitting that AI still hasn't deeply penetrated core enterprise processes. IBM dropped $40 billion in market cap on similar concerns. At least 12 major VC firms now hold stakes in both OpenAI and Anthropic simultaneously, which tells you everything about institutional conviction right now: nobody is sure who wins, so everyone is hedging.

The market is doing something healthy. It's demanding proof. Proof of efficiency, proof of compliance, proof of security, proof of ROI. Companies that can deliver on all four — while navigating an increasingly geopolitical battlefield — are the ones worth watching in the second half of 2026.

Keep your eyes on the plumbing, not the poetry. The future of AI is being built in data centers and defense briefings, not chat boxes.

Sources for this briefing are drawn from the McGauley Labs news feed covering February 23–28, 2026. Full source index available at mcgauleylabs.news.