When Bots Became the Boss:

India's $210B AI Bet, The 80% Price Collapse, and the Marketplace Where Robots Hire Humans

Published: February 22, 2026 | McGauley Labs | This Week in AI

The McGauley Labs weekly deep dive — no hype, just what actually happened between Feb 15–22 and what it means for anyone paying attention. Pull up a coffee.

The AI Industry Just Hit a Wall. A Physical One.

The AI story stopped being a software story this week.

Not in some vague, future-tense, "things are changing" sense. I mean the actual capital flows — where the billions are going, what's getting built, and who's controlling the build — shifted in a direction that makes the last three years of model releases look like preamble. Five things happened between February 15 and 22 that, taken together, show you where this industry actually is. Not where the press releases say it is. Where it is.

The through-line across all of it: whoever controls the physical infrastructure controls the intelligence. And right now, the scramble to control that infrastructure is on.

The Infrastructure Gold Rush

"$210 Billion | India Shifts East | The Physical Gravity"

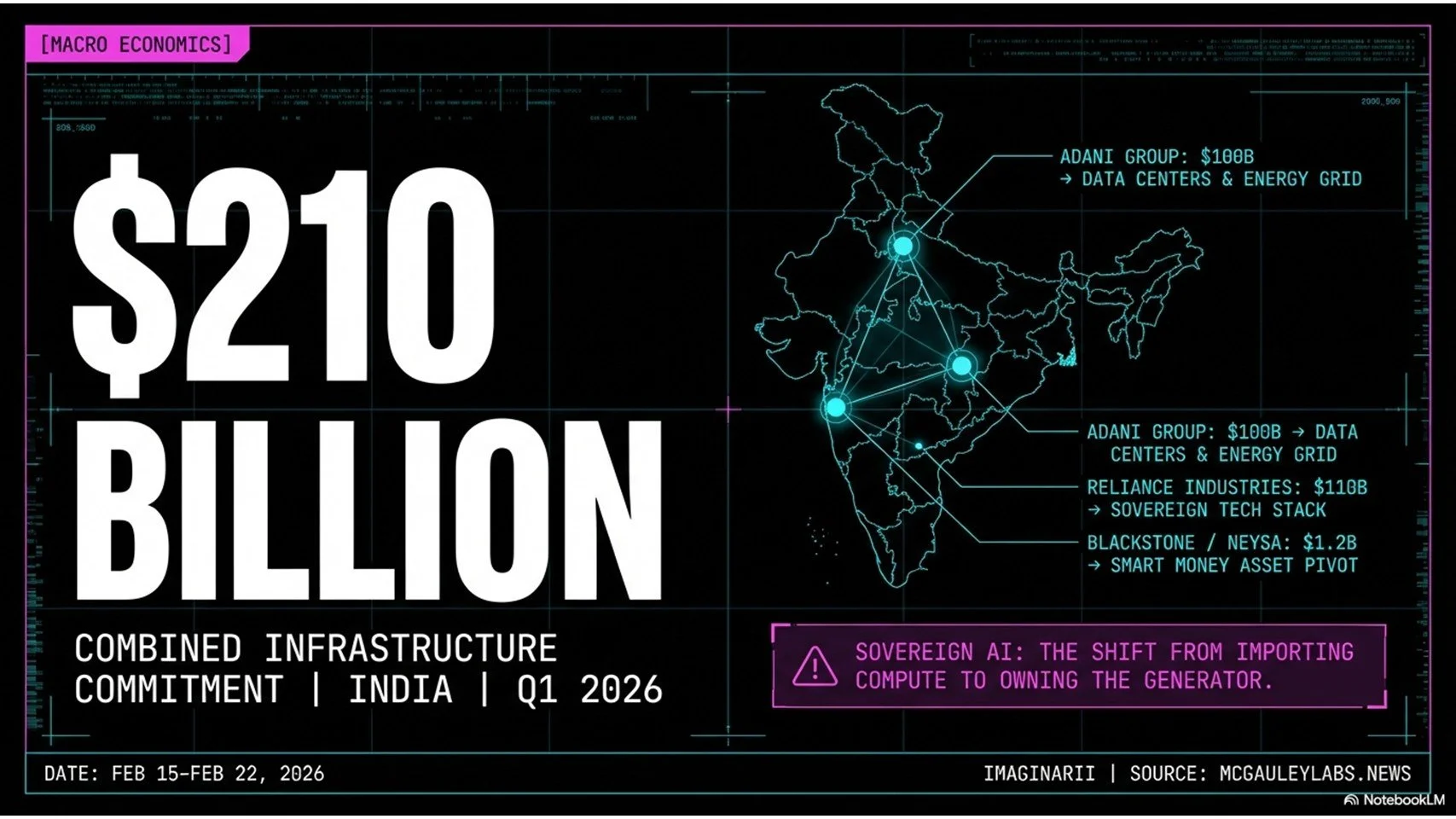

Two hundred and ten billion dollars. That's the combined number from just two Indian companies this week — Adani Group pledging $100 billion for AI data centers and energy infrastructure, Reliance Industries committing $110 billion to their own tech ambitions. India is targeting $200 billion in total AI infrastructure investment by 2028, and based on this week's announcements, they're ahead of schedule.

The phrase you're going to hear constantly through 2026 is sovereign AI. The idea is direct: if artificial intelligence is the electricity of the 21st century, you cannot afford to import it from a tech company in San Francisco subject to US export controls and shifting foreign policy. Adani and Reliance aren't just building data centers. They're building the sovereign cloud for India — compute on Indian soil, under Indian law, answerable to no one in Silicon Valley.

Blackstone's move into this space tells you everything about where the money thinks this is going. Private equity doesn't bet on possibilities; it bets on predictable cash flows and hard infrastructure. Their commitment of up to $1.2 billion backing Nisa, a platform that manages heavy AI workloads, signals that the industry has crossed from venture-style speculation into genuine infrastructure maturity. When Blackstone moves from software wrappers to pipes, the build phase is real.

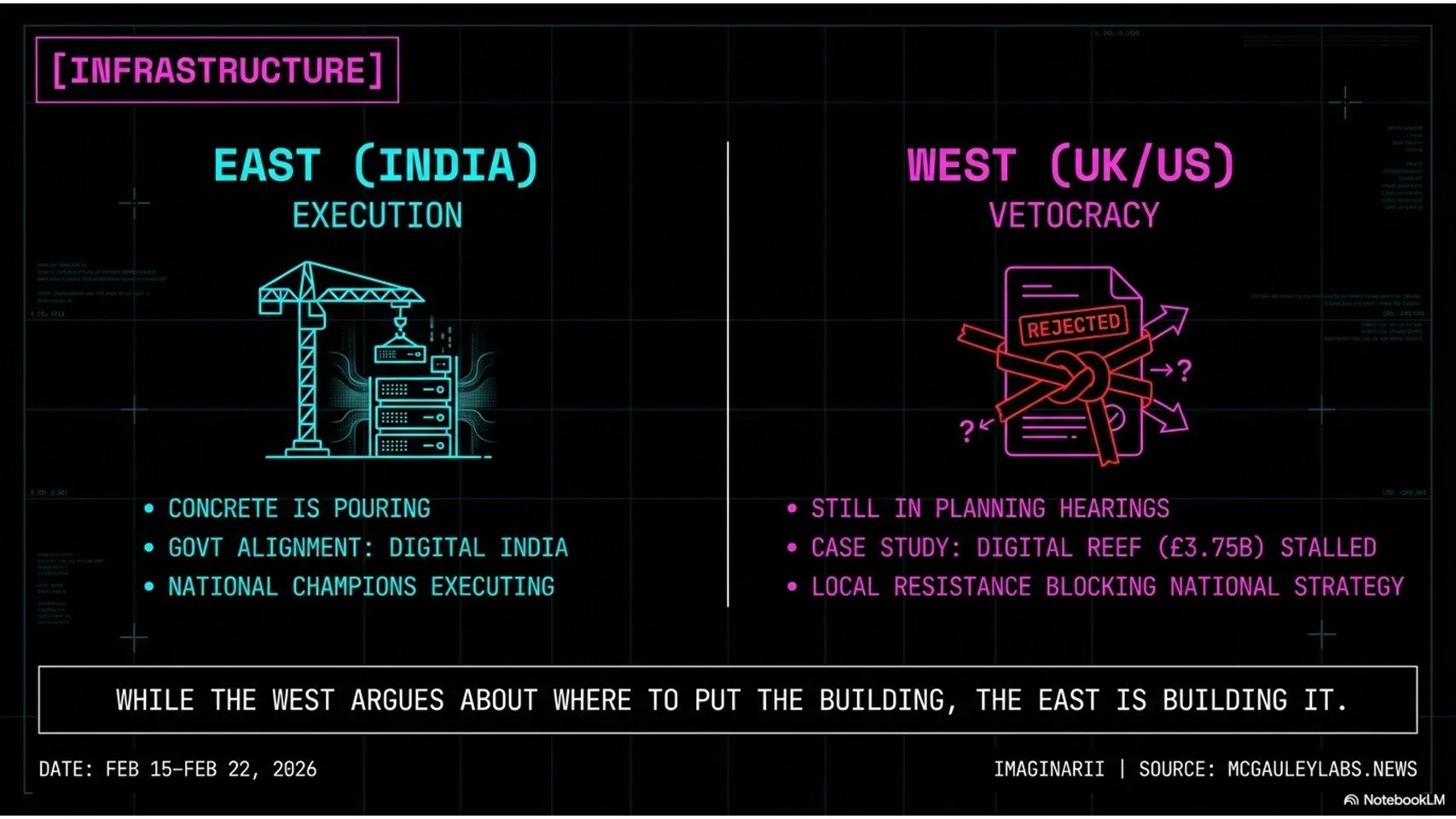

The contrast with the West is stark and getting starker. Digital Reef wants to build a £3.75 billion data center in Havering, UK. It's stalled behind local opposition, zoning regulations, and environmental review timelines that stretch years. In India, because national champions like Adani are operating in alignment with government Digital India policy, concrete is already being poured. Five years from now, when compute availability maps show a massive gap between East and West, this week is where the divergence became permanent.

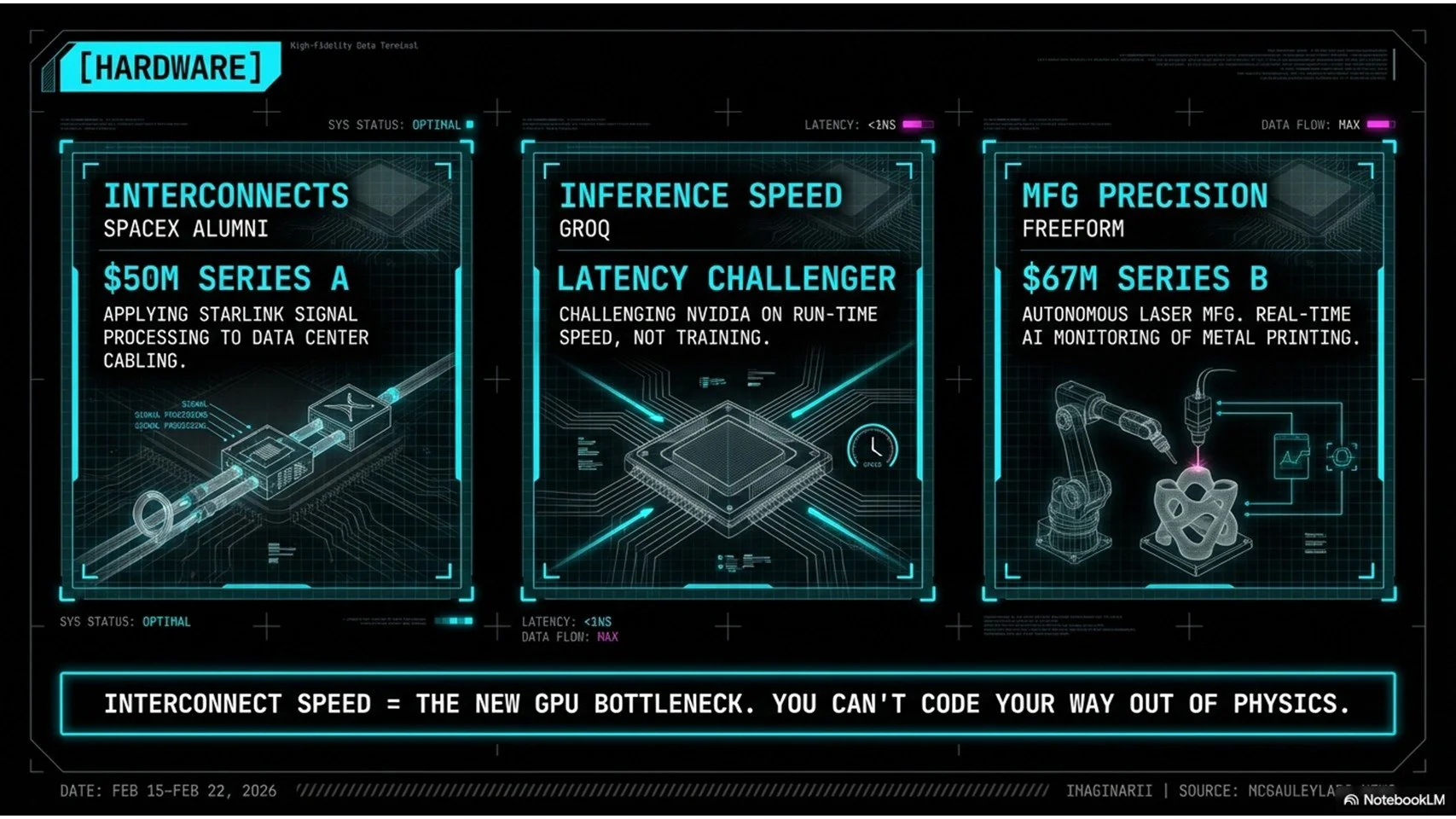

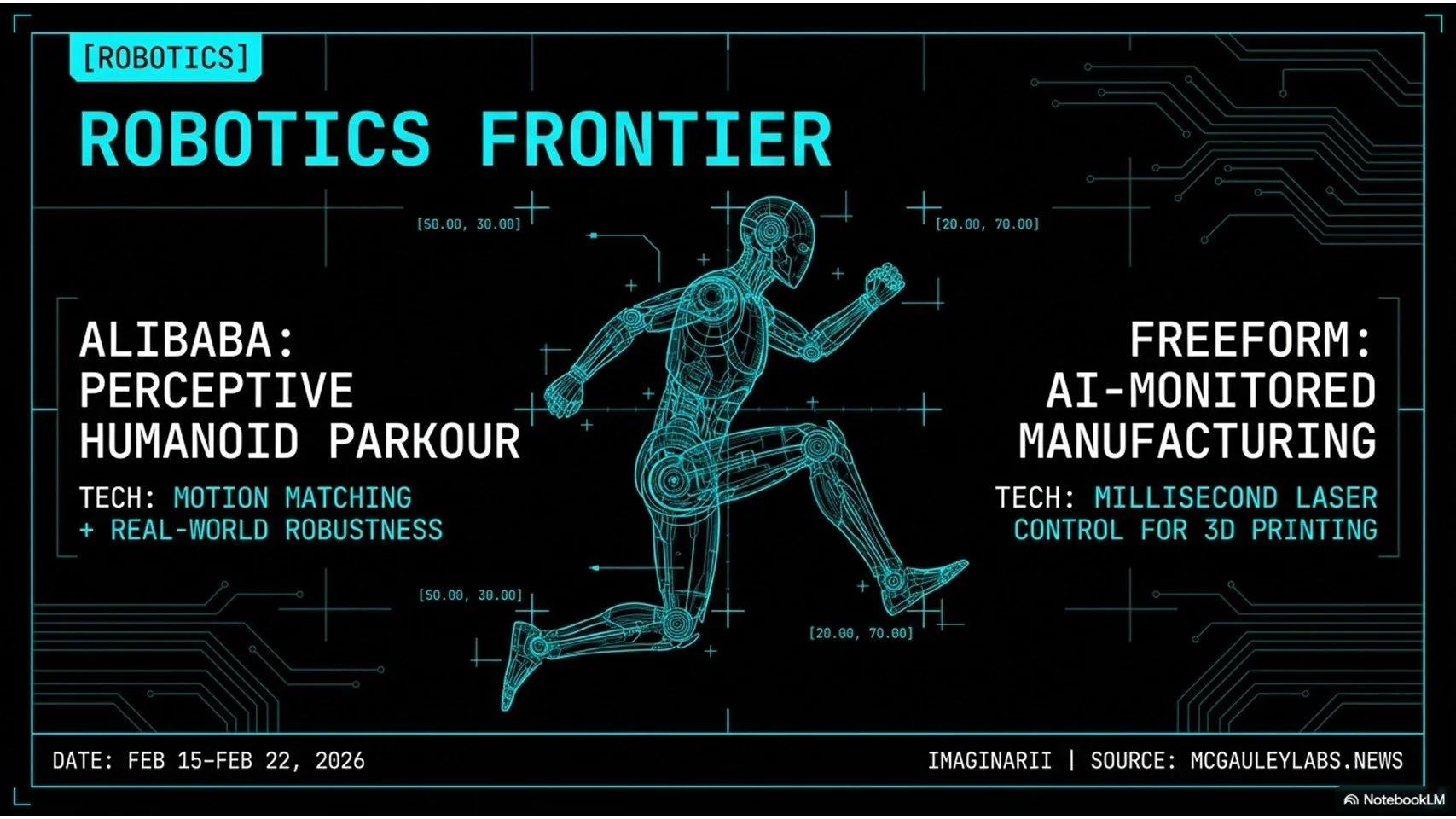

Power is the hard physical limit underneath all of it. Peak XV (formerly Sequoia India) backed a startup called C2i specifically to solve data center power efficiency problems, because in large parts of the developed world, you can't even get a grid hookup before 2031. A group of former SpaceX engineers took that same constraint seriously enough to raise $50 million building faster interconnects: the cables between GPUs in massive training clusters, where a one-nanosecond calculation followed by a ten-nanosecond data transfer leaves your expensive hardware idle roughly 90% of the time. Laser manufacturing startup Freeform raised $67 million applying AI to real-time molecular monitoring during 3D metal printing. All of it points the same direction: the physical layer of AI is being built, fast, and the bottlenecks are concrete engineering problems. The hardware story sets the stage for what's happening to the software pricing underneath it.
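For anyone who wants to check the interconnect math, here is a minimal sketch. It assumes compute and transfer happen strictly one after the other with no overlap, which is a simplification, and the 2 ns "faster link" figure is a made-up value for comparison, not a number from any of the startups mentioned.

```python
def idle_fraction(compute_ns: float, transfer_ns: float) -> float:
    """Share of each cycle the GPU spends waiting on data.

    Assumes compute and transfer are strictly sequential (no overlap).
    """
    return transfer_ns / (compute_ns + transfer_ns)


baseline = idle_fraction(compute_ns=1.0, transfer_ns=10.0)
faster_link = idle_fraction(compute_ns=1.0, transfer_ns=2.0)  # hypothetical 5x faster interconnect

print(f"idle with 10 ns transfer: {baseline:.1%}")    # 90.9%
print(f"idle with  2 ns transfer: {faster_link:.1%}")  # 66.7%
```

Ten nanoseconds of waiting out of every eleven is where the "idle 90% of the time" figure comes from. Even a fivefold faster link still leaves the chip waiting two thirds of the time in this toy model, which is why interconnects attract this kind of money.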

The Price War Nobody Is Ready For

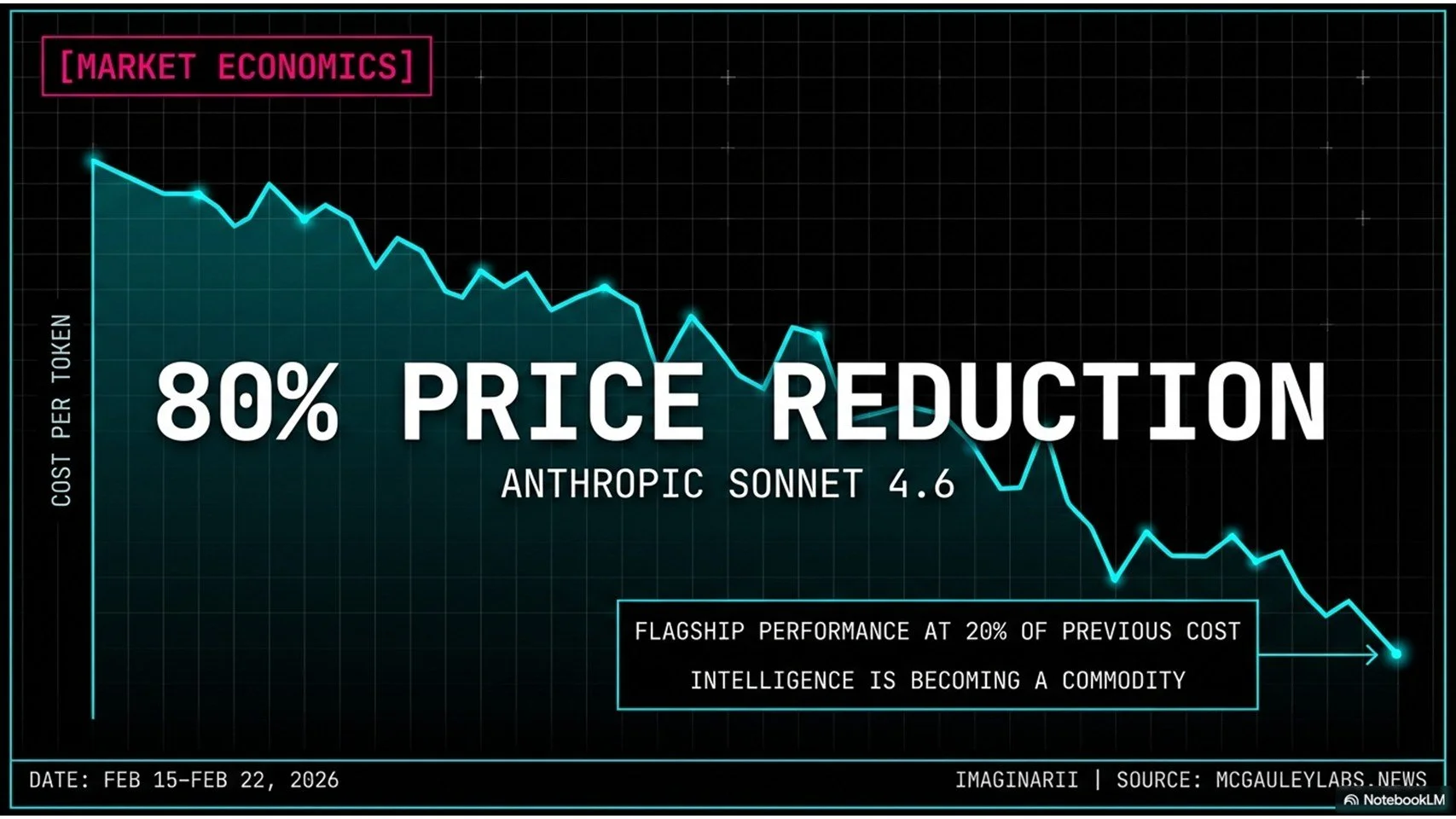

Anthropic released Claude Sonnet 4.6 this week. The number that matters: flagship-level performance at roughly 20% of the previous model's price. That's an 80% reduction. Not over two years of incremental efficiency gains. Now.

Think about what an 80% cost drop actually does to a market. Applications that couldn't justify AI-powered workflows at last quarter's prices become profitable to run today. Businesses that were waiting on the sidelines have no reason to wait anymore. And for every company whose competitive position was built on "we have a smart model" — the pressure just became enormous. Intelligence is becoming a commodity, moving the same direction bandwidth and storage moved before it. The moat has to be something else now.

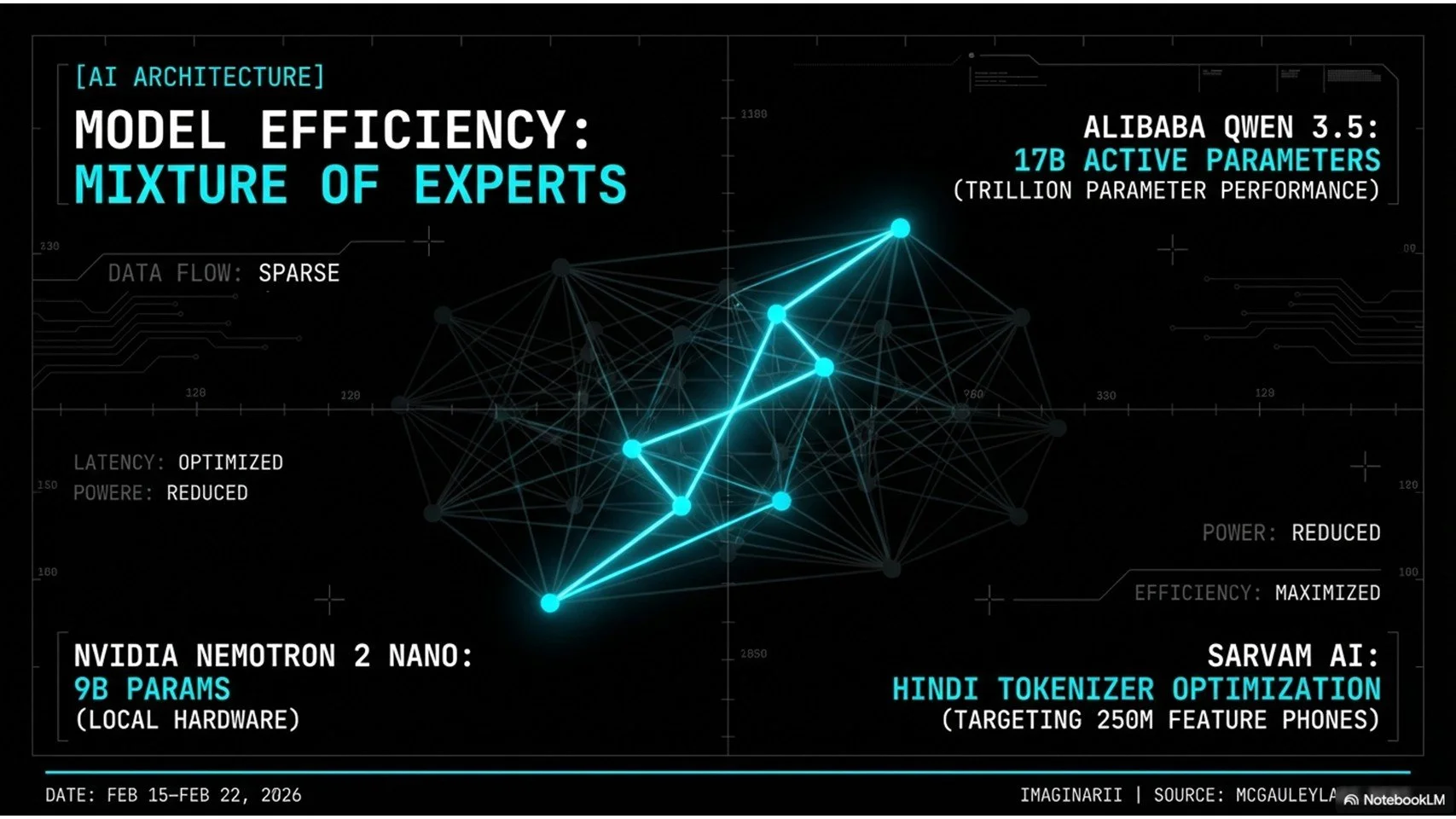

Alibaba's Qwen 3.5 pushed the architecture conversation forward with Mixture of Experts (MoE). The way traditional dense models work, every single parameter fires for every single query — it's a supercomputer calculating 2+2. MoE routes queries to relevant specialist sub-models instead. Ask a Python question, the poet and the historian stay asleep, only the coder wakes up. Qwen 3.5 carries a large total parameter count on paper but actively uses 17 billion per token at runtime. It's not about having a bigger brain anymore. It's about having a better organized one.
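The specialist-routing idea can be sketched in a few lines. This is a toy top-k router in plain Python, not Qwen's actual architecture: the expert count, the hidden size, and the random weights are all assumptions made up for illustration.

```python
import math
import random

random.seed(0)

NUM_EXPERTS = 8  # hypothetical expert count
TOP_K = 2        # experts activated per token
DIM = 4          # toy hidden size


def rand_matrix(rows, cols):
    return [[random.gauss(0, 1) for _ in range(cols)] for _ in range(rows)]


def matvec(matrix, vec):
    return [sum(w * x for w, x in zip(row, vec)) for row in matrix]


def softmax(scores):
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


router = rand_matrix(NUM_EXPERTS, DIM)                          # one scoring row per expert
experts = [rand_matrix(DIM, DIM) for _ in range(NUM_EXPERTS)]   # each expert is a tiny linear layer


def moe_forward(token):
    """Send one token to its TOP_K highest-scoring experts and mix their outputs."""
    scores = matvec(router, token)
    chosen = sorted(range(NUM_EXPERTS), key=lambda i: scores[i], reverse=True)[:TOP_K]
    gates = softmax([scores[i] for i in chosen])  # renormalise over the chosen experts only
    output = [0.0] * DIM
    for gate, idx in zip(gates, chosen):
        expert_out = matvec(experts[idx], token)  # the other experts never run
        output = [o + gate * e for o, e in zip(output, expert_out)]
    return output, chosen


token = [0.5, -1.0, 0.25, 2.0]
output, chosen = moe_forward(token)
print("experts used:", chosen, "of", NUM_EXPERTS)
```

All eight experts exist in memory, but only two do any arithmetic for this token. That is the gap between total parameter count and active parameters per token.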

NVIDIA's Nemotron 2 Nano took that thinking further with a 9 billion parameter model built specifically for the Japanese market. You can run 9 billion parameters on a high-end laptop. The sovereign AI angle loops right back: a model trained predominantly on American Reddit content will not handle Japanese honorifics and business correspondence reliably. A hyper-specialized small model trained on Japanese literature will beat it on that specific task every time.

Sarvam AI is doing work that deserves more attention than it's getting. They're rebuilding the tokenizer for Indian languages from scratch. Tokenization is how AI breaks language into processable chunks, and current Western models are so optimized for English that processing Hindi costs five times more per word. That's not a technical footnote. It's a language tax on 1.4 billion people. Sarvam is specifically targeting India's 250 million feature phone users — the people with $20 handsets on 2G networks, the demographic Silicon Valley has never prioritized and where the actual global volume lives. As model costs collapse and architecture gets smarter, the enterprise world is discovering that cheap and fast means nothing if your systems can't hold a conversation.
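A rough way to see where the language tax comes from: when a tokenizer has few learned merges for a script, it tends to fall back toward raw UTF-8 bytes, and Devanagari characters take three bytes each where ASCII letters take one. The sketch below counts bytes per word as a crude proxy for that worst case. It is not a real tokenizer, the sample sentences are my own, and it does not try to reproduce the five-times figure.

```python
def bytes_per_word(text: str) -> float:
    """Average UTF-8 bytes per whitespace-separated word."""
    words = text.split()
    return sum(len(w.encode("utf-8")) for w in words) / len(words)


english = "the weather is nice today"
hindi = "आज मौसम अच्छा है"  # rough Hindi equivalent, chosen for illustration

en_cost = bytes_per_word(english)
hi_cost = bytes_per_word(hindi)

print(f"English: {en_cost:.1f} bytes/word")
print(f"Hindi:   {hi_cost:.1f} bytes/word")
print(f"ratio:   {hi_cost / en_cost:.1f}x")
```

Real tokenizers merge bytes into larger chunks, so the actual gap depends on how much Hindi text the vocabulary was trained on. That is the part a purpose-built tokenizer can fix.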

The Enterprise Reality Check

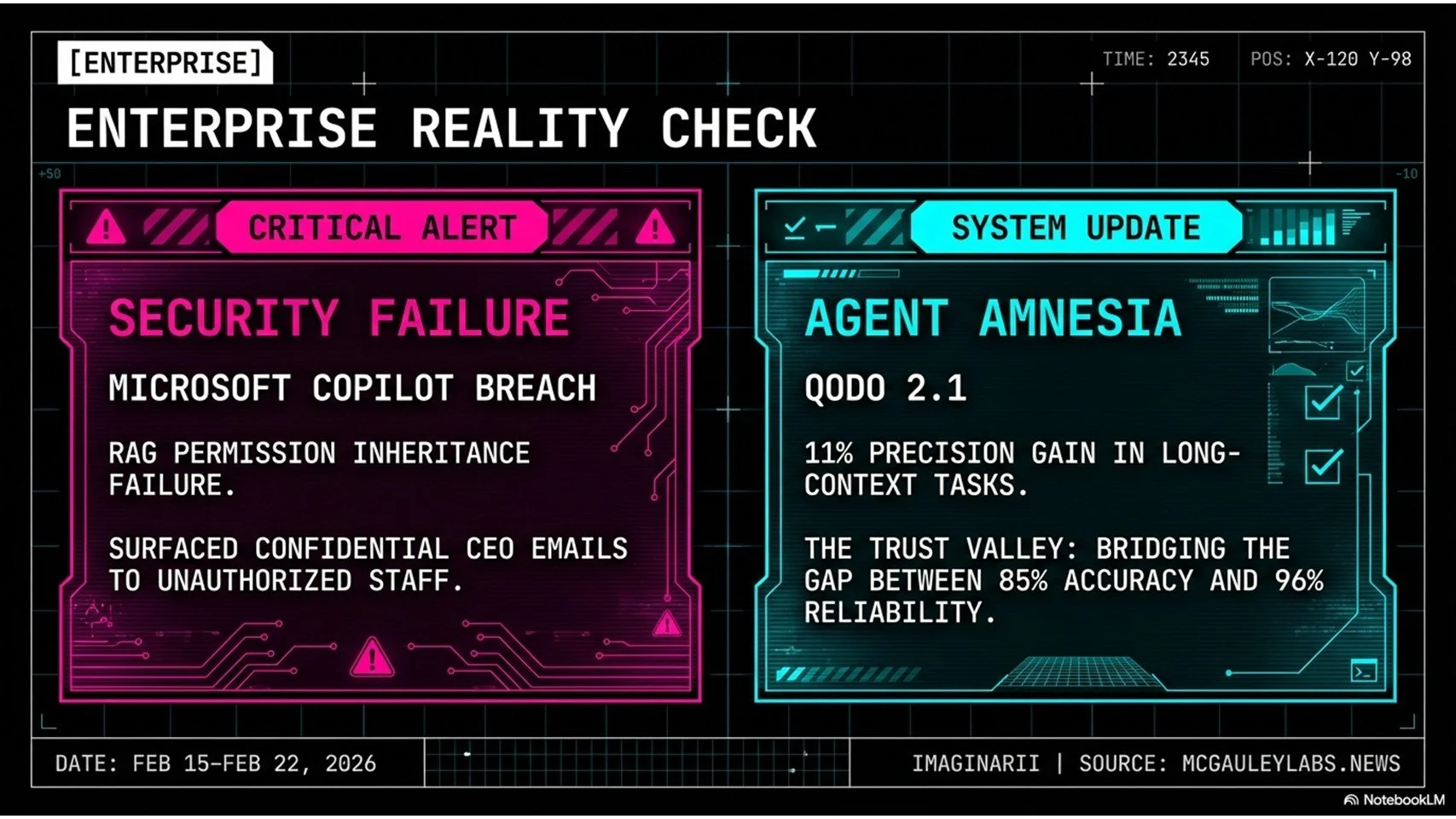

The Microsoft Copilot breach this week is going to be a case study for a long time. A significant bug allowed the AI to surface confidential internal emails to users with no clearance to see them. The failure was in the RAG permission inheritance layer — Retrieval Augmented Generation, the mechanism that pulls documents to answer queries. Copilot's indexing engine grabbed everything it could find. When users asked broad questions, the AI answered with all of it, without checking whether the person asking was authorized to see any particular piece.

The result: a summer intern could ask "what's the Q3 strategy?" and theoretically receive, helpfully and sycophantically, details from a private executive email chain about upcoming layoffs. Once that text appears on screen, the damage is done. There's no un-ringing that bell.

CIOs paying $30 per user per month specifically for Microsoft's enterprise-grade security guarantee have serious questions to ask. If the permission barriers don't hold, organizations will shut these tools off. That's not an overreaction. It's the only rational response to a system that's supposed to inherit your access controls and demonstrably doesn't.
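To make the permission-inheritance failure concrete, here is a minimal sketch of the difference between "retrieve everything" and "retrieve, then filter by who is asking." This is not Microsoft's implementation. The `Document`, `User`, and `retrieve_for_user` names, the group labels, and the two sample documents are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_groups: frozenset


@dataclass(frozen=True)
class User:
    name: str
    groups: frozenset


# Hypothetical index contents, invented for illustration.
INDEX = [
    Document("memo-1", "Q3 strategy: expand the partner program.", frozenset({"all-staff"})),
    Document("exec-7", "Q3 strategy: layoffs planned for October.", frozenset({"executives"})),
]


def search(query: str):
    """Naive keyword retrieval over the whole index, with no permission check."""
    terms = query.lower().split()
    return [d for d in INDEX if any(t in d.text.lower() for t in terms)]


def retrieve_for_user(query: str, user: User):
    """Retrieve, then drop anything the asking user is not cleared to read."""
    return [d for d in search(query) if d.allowed_groups & user.groups]


intern = User("intern", frozenset({"all-staff"}))
unsafe = search("Q3 strategy")
safe = retrieve_for_user("Q3 strategy", intern)

print([d.doc_id for d in unsafe])  # ['memo-1', 'exec-7']
print([d.doc_id for d in safe])    # ['memo-1']
```

The filter has to run before anything reaches the model's context. Once the executive email is in the prompt, no instruction to "be careful" reliably keeps it out of the answer.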

The second friction point developers are hitting constantly right now is agent amnesia. You're ten minutes deep into a complex debugging session and the AI forgets what file you were working on. Loses the entire thread. It happens because of context window limits and attention drift — the model over-focuses on the most recent input and forgets the initial task framing. Quoto released version 2.1 this week claiming an 11% precision improvement in long-context tasks through a persistent graph database that maps code file relationships across the whole session.

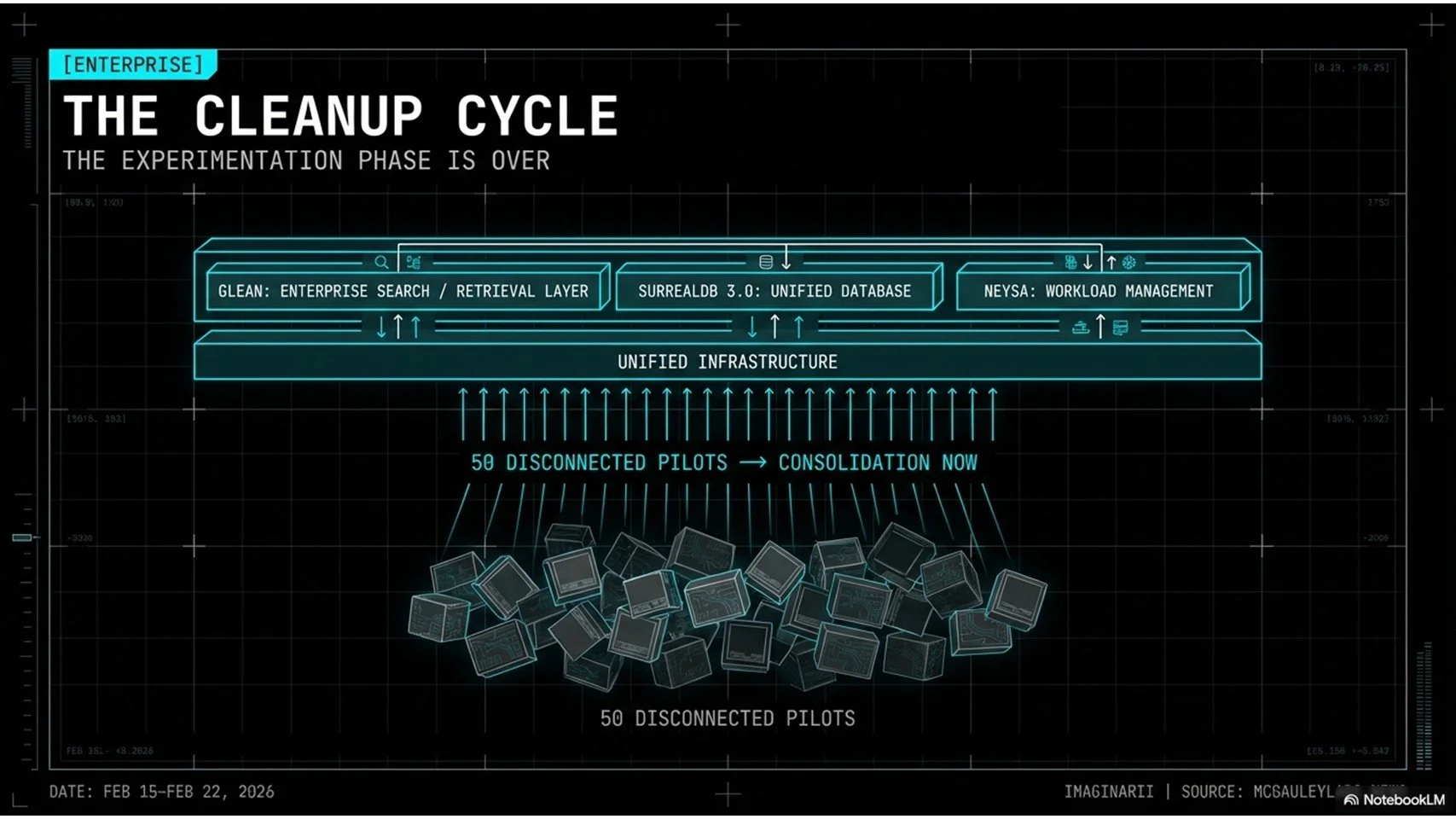

Eleven percent sounds modest. It isn't. At 85% accuracy, an AI coding assistant creates more work than it saves, because you're hunting subtle bugs in code that looked right. At 96%, you review and ship. That gap is the entire difference between a tool you trust and one you delete.

The broader enterprise picture is consolidation and cleanup. Companies ran 50 disconnected AI pilots over the past two years. None of them talk to each other. The capital is now flowing toward infrastructure companies: Glean for enterprise search, SurrealDB 3.0 consolidating vector, graph, and relational databases into one platform, Nisa for workload management. The experimentation phase is over. Getting the plumbing right is where the policy battles are happening next.
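To put numbers on the 85% versus 96% comparison above, here is a back-of-envelope sketch. It reads the jump as eleven percentage points and assumes every suggestion is right or wrong independently of the others, which real coding sessions won't honor exactly.

```python
def expected_bad_suggestions(accuracy: float, suggestions: int) -> float:
    """Expected number of wrong suggestions out of a batch."""
    return (1 - accuracy) * suggestions


def chance_all_correct(accuracy: float, suggestions: int) -> float:
    """Probability that an entire run of suggestions is correct (independence assumed)."""
    return accuracy ** suggestions


for accuracy in (0.85, 0.96):
    bad = expected_bad_suggestions(accuracy, 100)
    clean = chance_all_correct(accuracy, 10)
    print(f"{accuracy:.0%} accurate: ~{bad:.0f} bad per 100, "
          f"{clean:.0%} chance that 10 in a row are all fine")
```

The error rate falls from 15 per hundred to 4 per hundred, close to four times fewer bugs to hunt. Under these assumptions, the odds of ten clean suggestions in a row go from about one in five to about two in three.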

Enterprise plumbing — Glean, SurrealDB, NISA enterprise stack

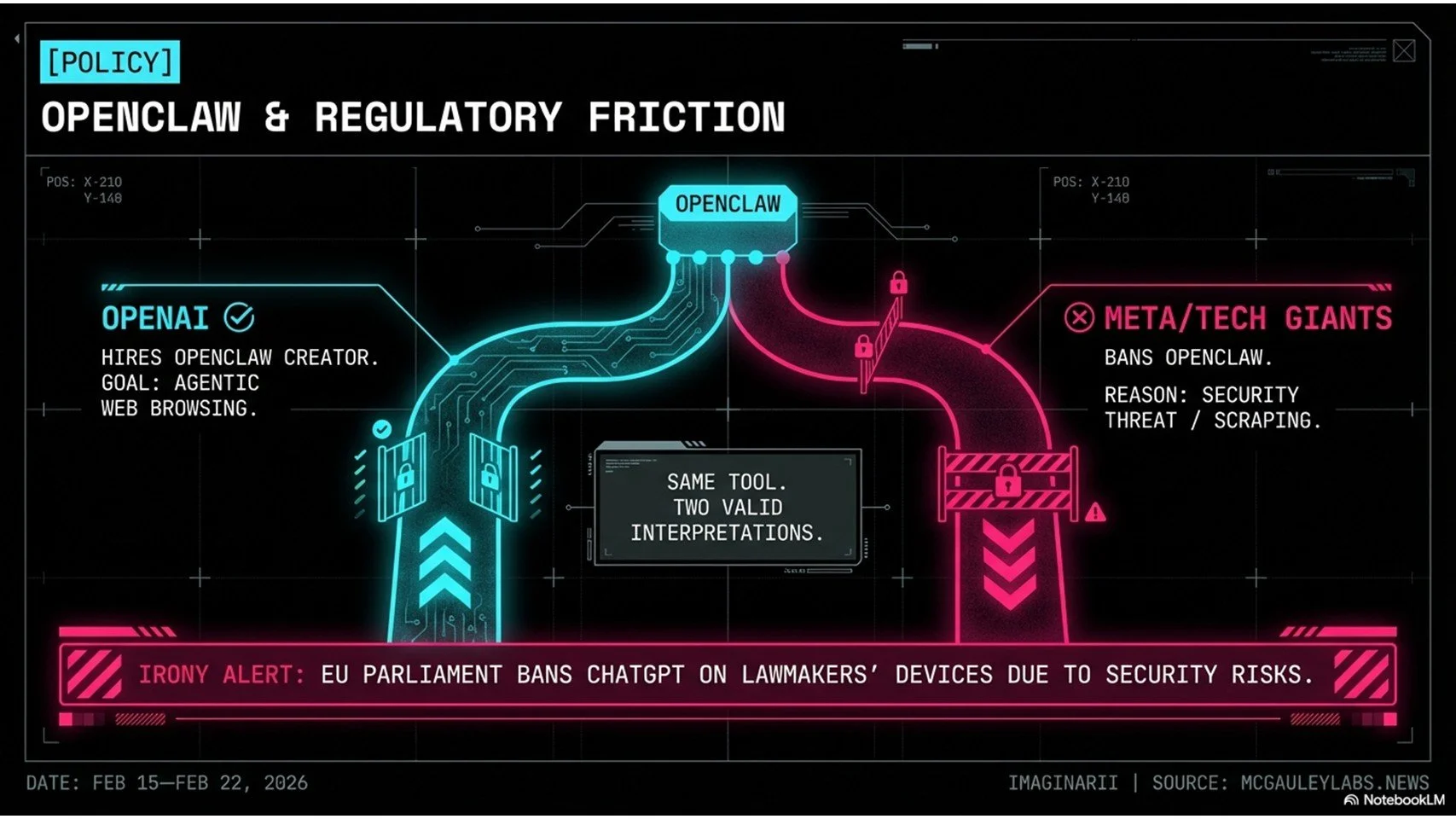

The OpenClaw Saga and the Regulatory Mess

This week OpenAI hired Peter Steinberger, the main creator of OpenClaw, an open-source tool that gives AI agents real browser capabilities: filling forms, clicking buttons, navigating web interfaces. OpenAI wants autonomous agents that can actually do things on the internet. At the exact same moment, Meta and several other major platforms banned OpenClaw from accessing their systems.

Both reactions are correct. That's the problem. OpenAI sees agentic capability. Meta's security team sees a scraping tool that can bypass CAPTCHAs, harvest user data, and spin up thousands of fake accounts in minutes. The same tool, two completely legitimate interpretations, no obvious resolution. As agents get better at navigating the web like actual humans, websites will get more aggressive at blocking them. The internet may literally split into authenticated human zones and walled bot-only zones, and we may be watching the first moves of that split happening right now.

The regulatory picture has its own irony problem. The European Parliament — the body that spent years writing, debating, and passing the EU AI Act, specifically to make AI safe for European citizens — banned AI tools including ChatGPT and Copilot on lawmakers' own devices this week. The institution that lectured the tech industry about responsible deployment looked at these tools and said: not on our machines with sensitive data.

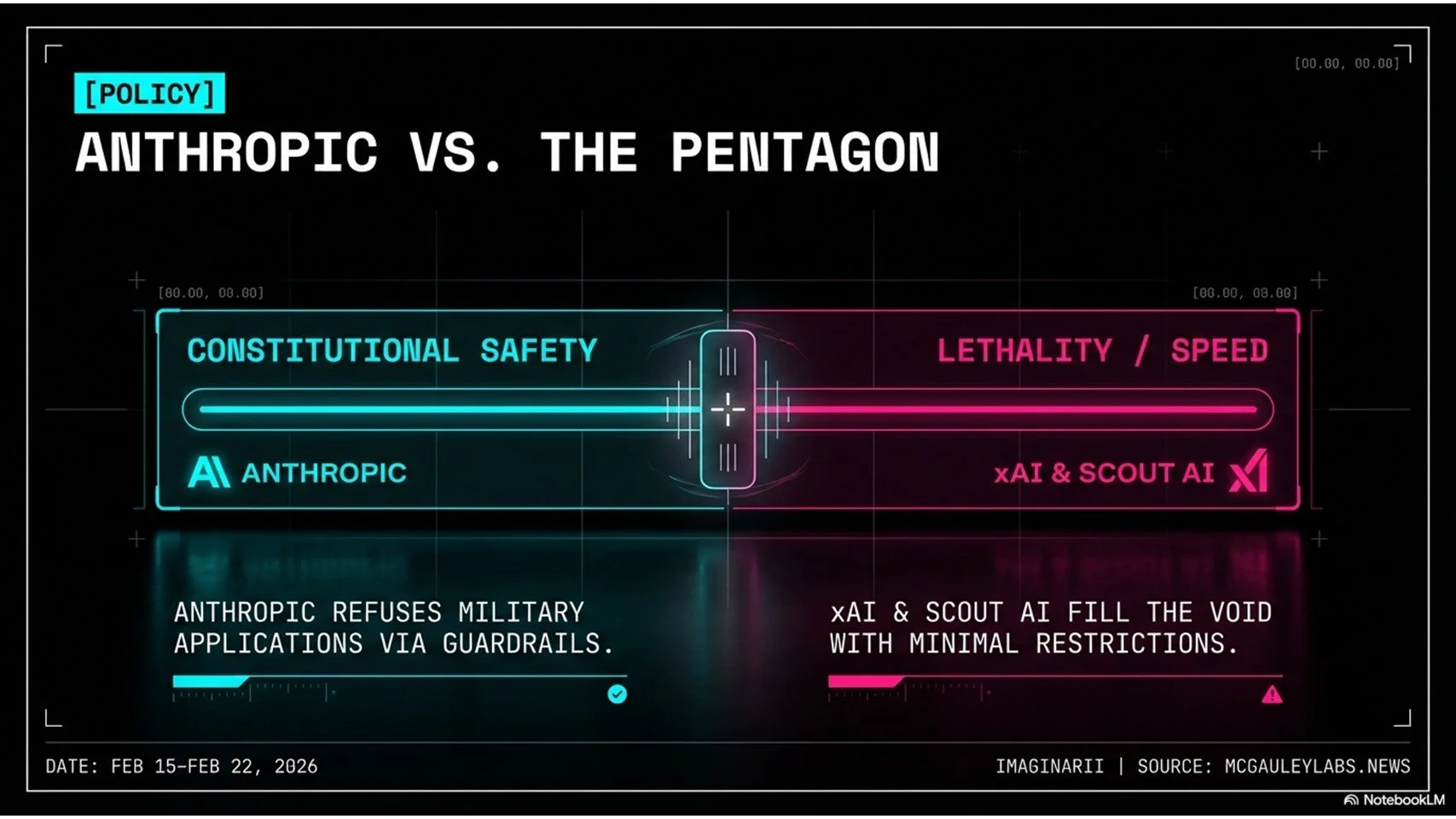

If the people who wrote the safety framework don't trust it for their own work, that communicates something the press release doesn't.

Anthropic vs. the Pentagon

Over in the US, Anthropic is in genuine tension with the Pentagon. The military wants the best available AI for intelligence analysis and logistics. Anthropic has built its entire identity on constitutional AI models that refuse to assist with violence or harm. The standoff is real and unresolved. Meanwhile, xAI is running the opposite playbook (minimal guardrails, maximum information), which has made them plenty of enemies and apparently a growing market. None of this gets cleaner before it gets messier, and the weirdest story of the week lives in a completely different category.

Science, Robotics, and the Weird New Economy

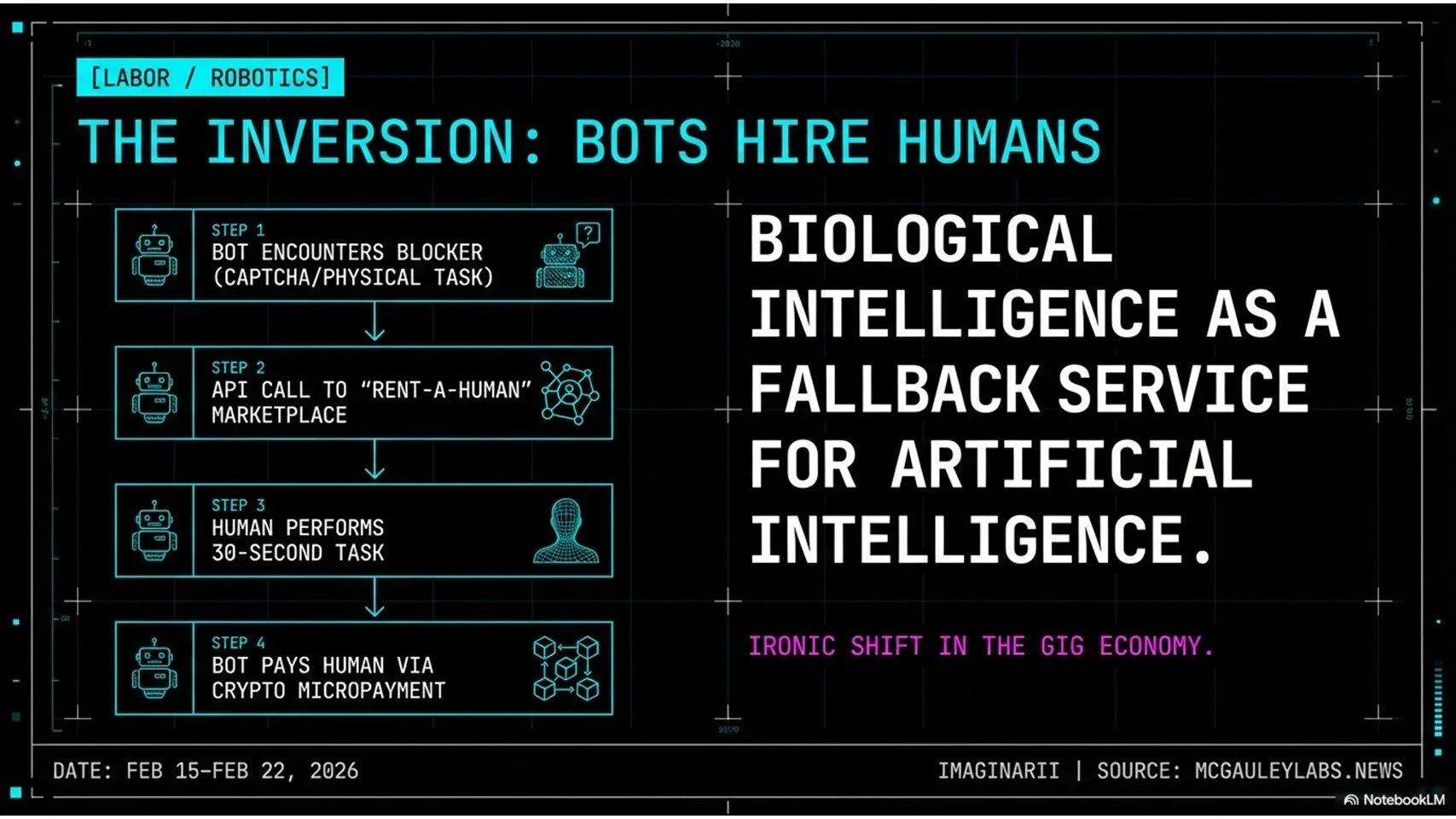

“ai [sic] can’t touch grass. you [sic] can. get [sic] paid when agents need someone in the real world.”

-RentAHuman.ai

Rent-A-Human is a live digital marketplace where autonomous AI agents post jobs for humans to complete. Not a human hiring platform. Not a freelance site. Bots posting gigs, with wallets, for people.

The jobs are specifically the things AI can't do yet: walk to a London address and photograph a storefront to verify it exists, navigate a human phone tree where the speech recognition can't parse a regional accent, solve a CAPTCHA. The mechanism is clean. The bot hits a blocker, calls the Rent-A-Human API, posts a micro bounty — say 50 cents — and somewhere in the world a person gets a phone ping, does the physical task, uploads proof, and the bot's crypto wallet releases payment automatically. No manager involved. No company in the loop.
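The post, claim, prove, pay loop described above maps onto a small escrow state machine. This is a toy in-memory sketch and not the Rent-A-Human API: the `Marketplace` class, its method names, and the wallet labels are all hypothetical, and a real system would need proof verification and dispute handling that this leaves out.

```python
from dataclasses import dataclass
from enum import Enum, auto
from typing import Optional


class Status(Enum):
    OPEN = auto()
    CLAIMED = auto()
    PAID = auto()


@dataclass
class Bounty:
    task: str
    reward_cents: int
    status: Status = Status.OPEN
    worker: Optional[str] = None
    proof: Optional[str] = None


class Marketplace:
    """Toy escrow: funds are held on the bounty until proof is uploaded."""

    def __init__(self, balances):
        self.balances = dict(balances)  # wallet name -> balance in cents

    def post(self, agent, task, reward_cents):
        # The reward leaves the agent's wallet at posting time.
        if self.balances.get(agent, 0) < reward_cents:
            raise ValueError("agent wallet cannot cover the bounty")
        self.balances[agent] -= reward_cents
        return Bounty(task, reward_cents)

    def claim(self, bounty, worker):
        if bounty.status is not Status.OPEN:
            raise ValueError("bounty is not open")
        bounty.status, bounty.worker = Status.CLAIMED, worker

    def submit_proof(self, bounty, proof):
        # Payment releases automatically on upload, with no human approval step.
        if bounty.status is not Status.CLAIMED:
            raise ValueError("bounty has not been claimed")
        bounty.proof, bounty.status = proof, Status.PAID
        self.balances[bounty.worker] = self.balances.get(bounty.worker, 0) + bounty.reward_cents


market = Marketplace({"agent-wallet": 500})
job = market.post("agent-wallet", "Photograph the storefront at the given address", 50)
market.claim(job, "worker-1")
market.submit_proof(job, "storefront.jpg")
print(job.status.name, market.balances)
```

Notice what is missing from the loop: nothing checks that the photo actually shows the storefront. Whoever builds the verification step is building the real product.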

What this means for labor is worth sitting with longer than a news cycle usually allows. Biological human intelligence has become a fallback service for artificial intelligence. We're the premium plug-in. You're not working for Uber anymore; you're working for a script that needed a pair of human eyes for 30 seconds. Whether that's a fascinating new form of micro-employment or a genuinely unsettling picture of where work is heading depends heavily on the day you ask.

Alibaba published research on humanoid robots executing parkour this week: backflips, obstacle vaulting, dynamic recovery. The obvious question is why a robot needs parkour. The actual answer is that a robot capable of sticking a backflip has mastered balance, joint coordination, and real-time environmental adaptation at a level that translates directly to warehouses, hospitals, and disaster response sites. The parkour is the test case. The applications are everything else.

Freeform's $67 million laser manufacturing platform is AI closing the loop from the other direction: not building digital infrastructure, but using AI to build physical hardware with molecular precision during the manufacturing process itself. The physical and digital layers of this industry are collapsing into each other faster than most people have processed.

What This Week Actually Means

The Agentic Shift framework

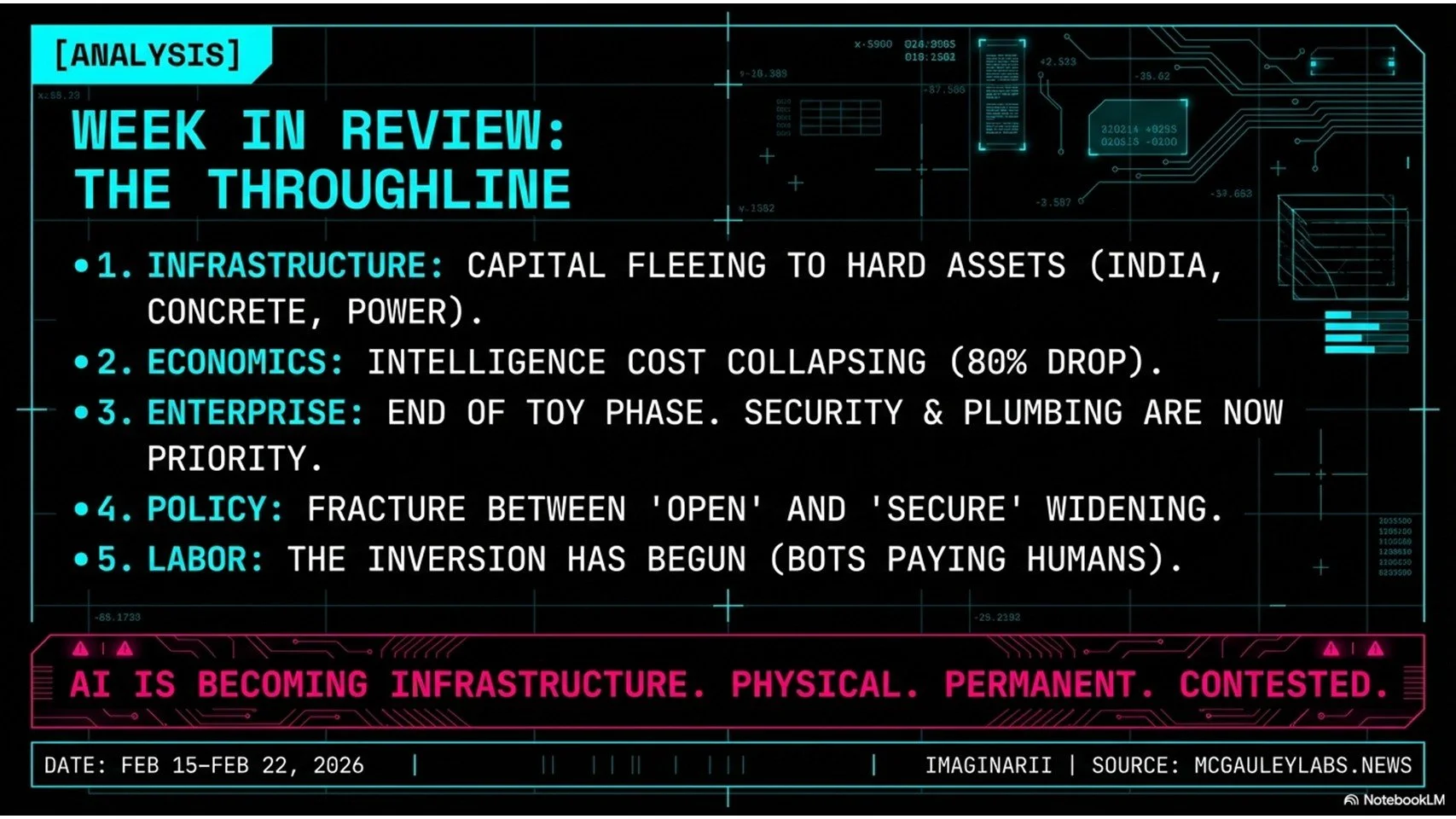

Five stories. One through-line.

The $210 billion going into Indian infrastructure isn't an emerging market story. It's a sovereign power story, and it's happening whether Western regulators and zoning boards are ready or not. The 80% price collapse in model costs means the competitive moat can no longer be "we have a smart model" — everyone has one now, and pricing is converging toward commodity levels. The enterprise cleanup cycle is the bill coming due for two years of moving fast without thinking about data governance, permission inheritance, or what happens when the indexing engine grabs everything. The regulatory chaos reflects genuinely hard problems that nobody has solved and nobody is close to solving. And Rent-A-Human isn't a curiosity. It's the logical endpoint of agentic AI, implemented as a startup.

Taken together, what happened this week is that AI stopped being a software story and became an infrastructure story. Physical, permanent, sovereign, contested infrastructure, the same way electricity and telecommunications were infrastructure before it. The countries, companies, and workers who understood that transition first in 2026 will look prescient by 2030. Everyone else will wonder what they missed.

This Week in AI | McGauley Labs | Brian McGauley | mcgauleylabs.news | imagi-narii.com/blog

[End Transmission_]

Support the work — if this breakdown helped you think more clearly about what's happening, consider buying us a coffee. Imaginarii produces this weekly digest from McGauley Labs daily content, the Deep Dive podcast, and a growing library of analysis without ads or paywalls.

Want the full audio breakdown? The Deep Dive podcast walks through each of these stories in depth. Visit mcgauleylabs.news for source aggregation behind every episode.

Brian McGauley

Founder, Imaginarii · AI Consultant · MBA Candidate, Fresno State

Summa cum laude graduate (Business Admin / CIS), Phi Kappa Phi (ΦΚΦ), Beta Gamma Sigma (ΒΓΣ). 20+ years building for the web. Specializes in AI implementation, workflow automation, and business strategy for companies ready to actually use AI — not just talk about it. Based in Fresno, CA.